Wellesley Public Schools in ‘learning phase’ with AI

Wellesley Public Schools faculty and administrators have been trying to get their arms around the potential benefits and problems artificial intelligence might bring to the education system since the arrival of ChatGPT in late 2022. But more formal efforts to address AI have sped up since last summer.

Adam Steiner, director of educational technology, shared an update alongside Sandy Trach, assistant superintendent of teaching and learning, about an hour into the March 10 School Committee meeting (see Wellesley Media recording for presentation and discussion).

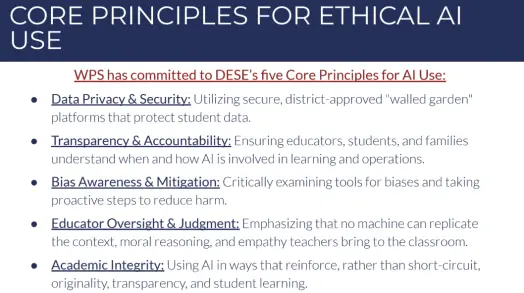

Steiner pointed to draft guidance for using AI in Wellesley Public Schools that was shared with faculty in the fall. That guidance, he said, syncs well with recommendations shared by the Massachusetts Department of Elementary and Secondary Education (DESE). He emphasized AI’s role in supporting but not replacing the work of teachers, and the need to use it in a secure and transparent way.

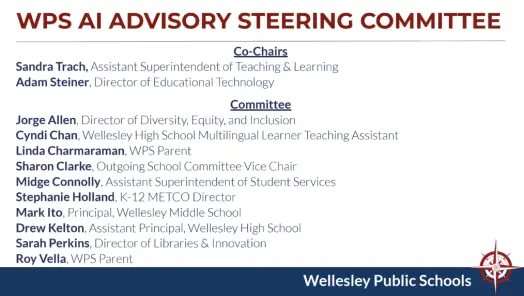

Wellesley Public Schools has formed a district-wide AI Advisory Steering Committee consisting of teachers, administrators, tech experts, and parents, as well as working groups focused on more discrete topics. Students aren’t currently in these groups, but they and their feedback will be brought in down the road through focus groups and similar channels, the administrators said.

Research by the groups is expected to help inform curriculum and instruction, and be shared with faculty and School Committee members, Trach said. Final recommendations should be ready between May and June, and could find their way into everything from school handbooks to curriculum to professional development. WPS is working with a consultant on its AI strategy as well.

Early findings are that AI has potential for more easily differentiating instruction for students at various learning levels, and for improving efficiency in planning and assessment. Creating an idea bank based on useful AI prompts is one possible development (and teaching faculty how to create good prompts is seen as a likely professional development topic).

Concerns include inherent bias in AI results and the potential loss of “productive struggle” in learning things for the first time. There’s also a question about equity—will all students have access to the same technology? NotebookLM is a tool that Steiner said shows promise for fair and controlled usage by students.

One thing the schools want to be sure of is that teachers and students are clear on when AI is OK to use, and when it is not. Found in the Wellesley High student handbook: “Students may not use an artificial intelligence program to aid their work, or an assignment or test unless explicitly directed to do so by their instructor.” (The handbook also includes rules against using AI to bully others.)

Trach also raised the issue of AI detection tools, and their shortcomings. “We’re trying to move toward teaching academic integrity as a skill,” she said.

Following the presentation, School Committee members and a student rep asked questions. Costas Panagopoulos inquired about using AI to make school administration more efficient, such as managing enrollment or communications. Trach said such efforts have begun, though Steiner acknowledged such “agentic” work would likely be addressed more directly further down the road. Of course, AI capabilities are already finding their way into various IT tools, such as help desk systems, used by the schools.

School Committee Chair Niki Ofenloch sought assurances that AI would be used consistently among faculty. Trach confirmed that such coherence is important, though she also noted that “the ground is changing under our feet” in terms of AI tools and developments.

Student rep Alex Budson-McQuilken shared a reminder that there is currently a group of “conscientious objectors to the usage of AI” and suggested that teachers not force students to use the tools. “While many view it as a new and essential skill in the workplace, many students simply for ideological reasons aren’t ready to adopt it yet…” One student concern Budson-McQuilken raised was the environmental impact of AI (Steiner said environmental issues have been raised during ongoing discussions).

Trach said: “I want to emphasize that for anyone listening and all of us here, there’s no formal adoption of AI. We are in a learning phase, and while we are trying things out, we are really conscious of offering options…”

She continued: “I do feel [AI] is ubiquitous and I would rather us take hold of this and try to understand it rather than it take ahold of our students and educators and it be improperly used, which is something I worry about…”
